Team Members

Concept

Scopen is one of the UCSB CE 2020 capstone projects. We built it not only to satisfy our curricular requirements but, more importantly, for ourselves: we have always wanted an affordable, wireless, handheld oscilloscope-like instrument that makes debugging circuits easy.

Product Videos

If you want to check out our full presentation video, here is the link: Scopen Presentation

Hardware

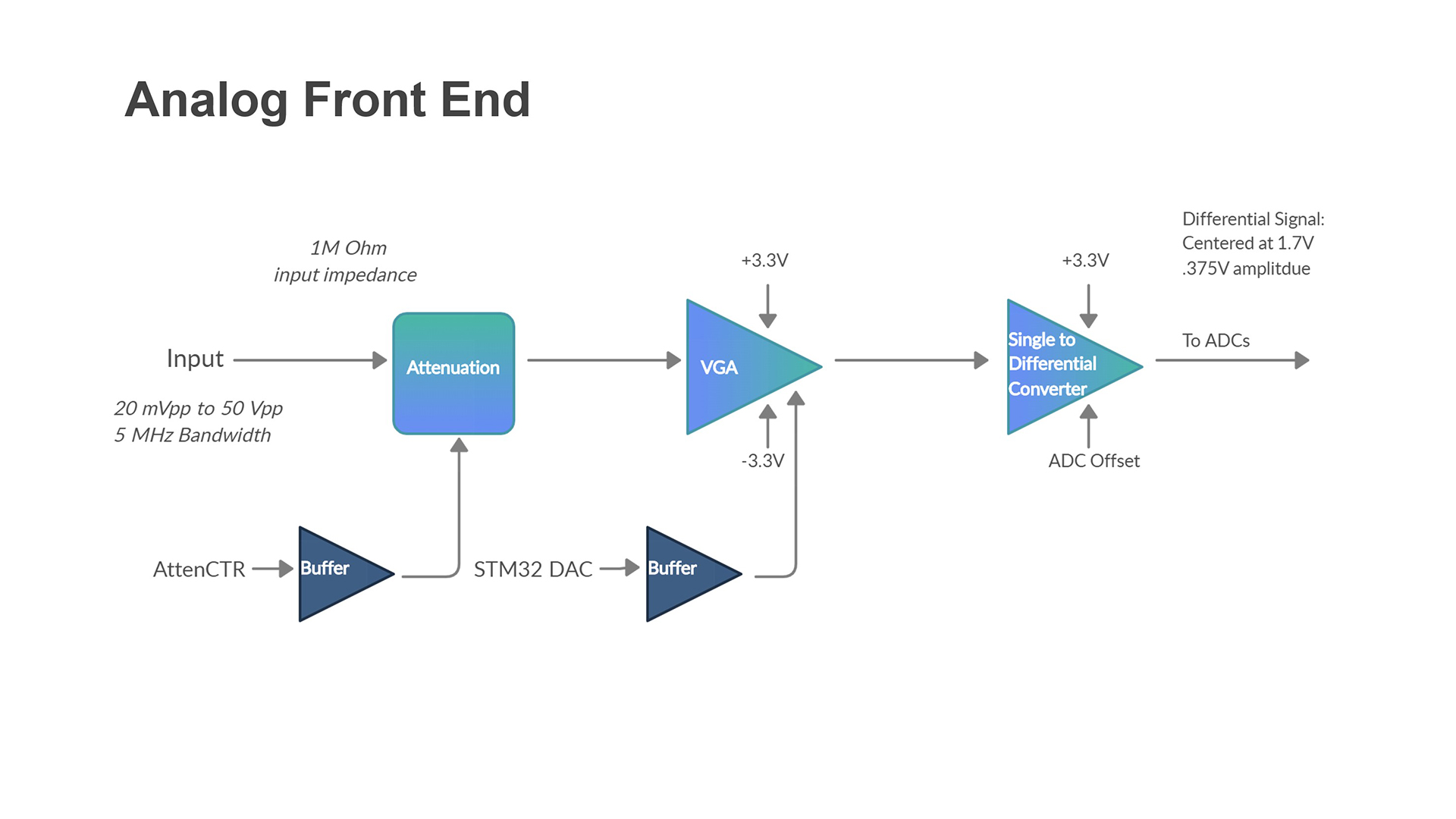

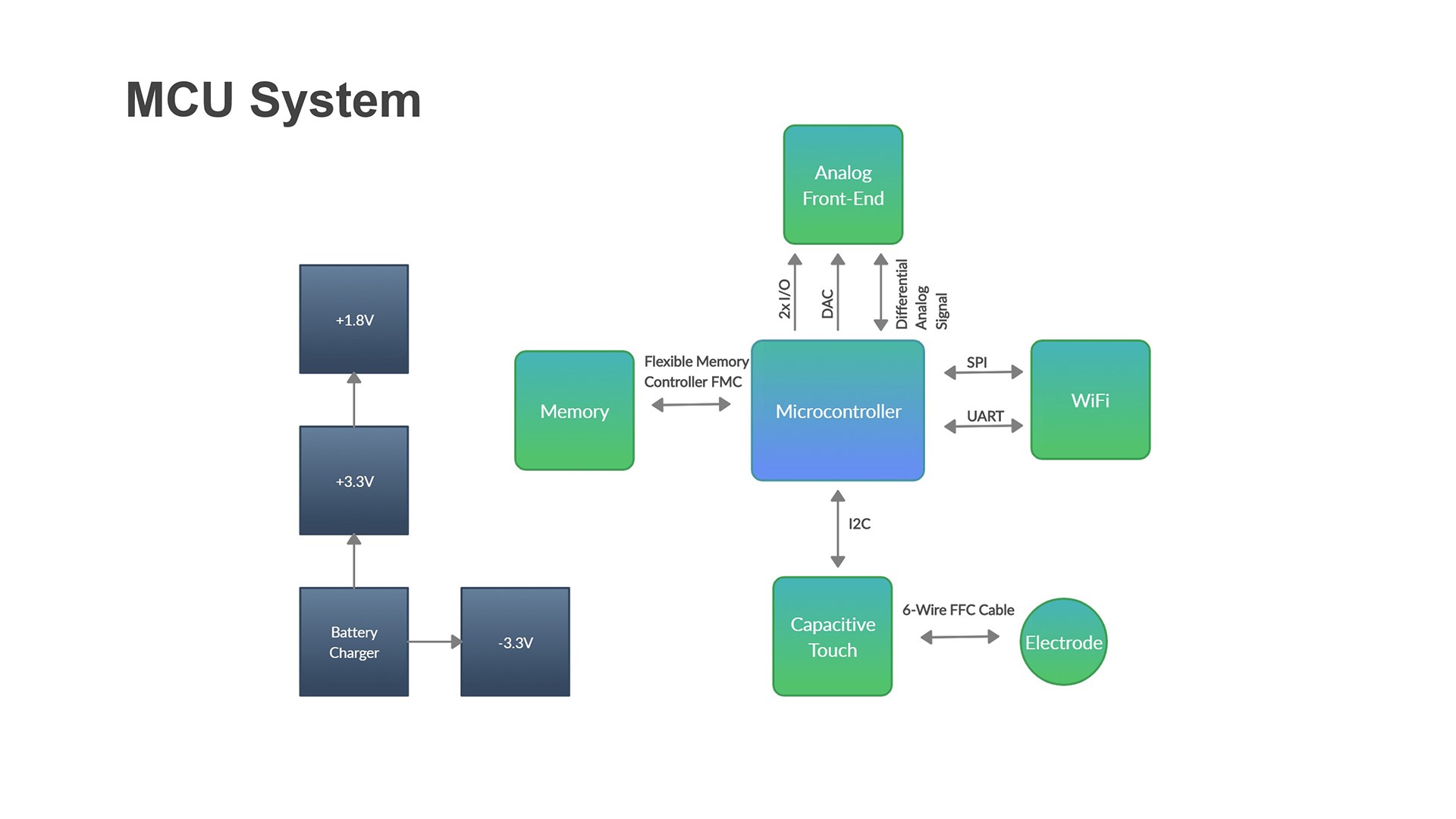

Overall, the hardware system consists of two parts: the Analog Front End (AFE), which isolates and scales the input signal, and the microcontroller (MCU) system, which samples the output of the AFE and manages the surrounding peripherals. In detail, the AFE consists of several stages that, respectively, isolate the input signal, scale it up or down, and convert the output into a differential signal. In addition to the main microcontroller, the MCU system includes an external SRAM (for storing the sampled data), a touch sensor (for intuitive user interaction), and a WiFi controller (for communication between the MCU and the PC/phone app). The architecture of the AFE and the MCU system can be seen in the following two diagrams.

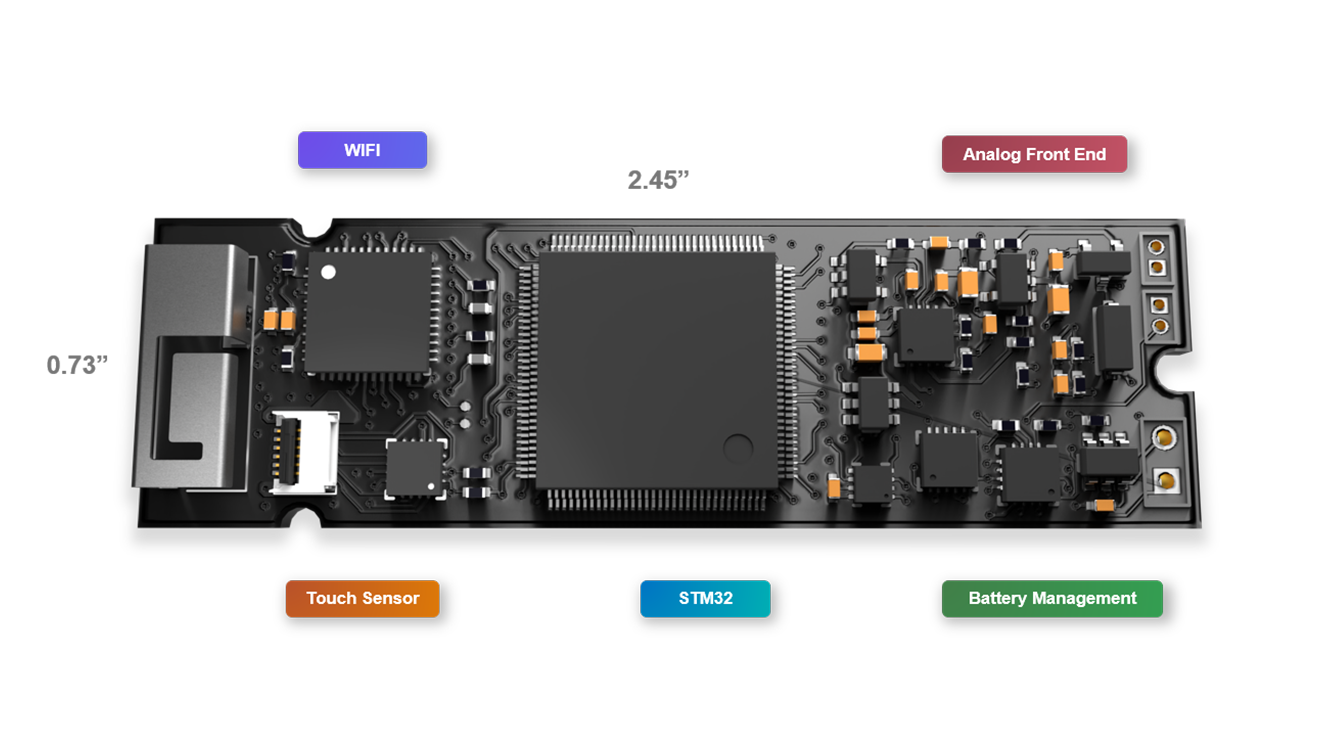

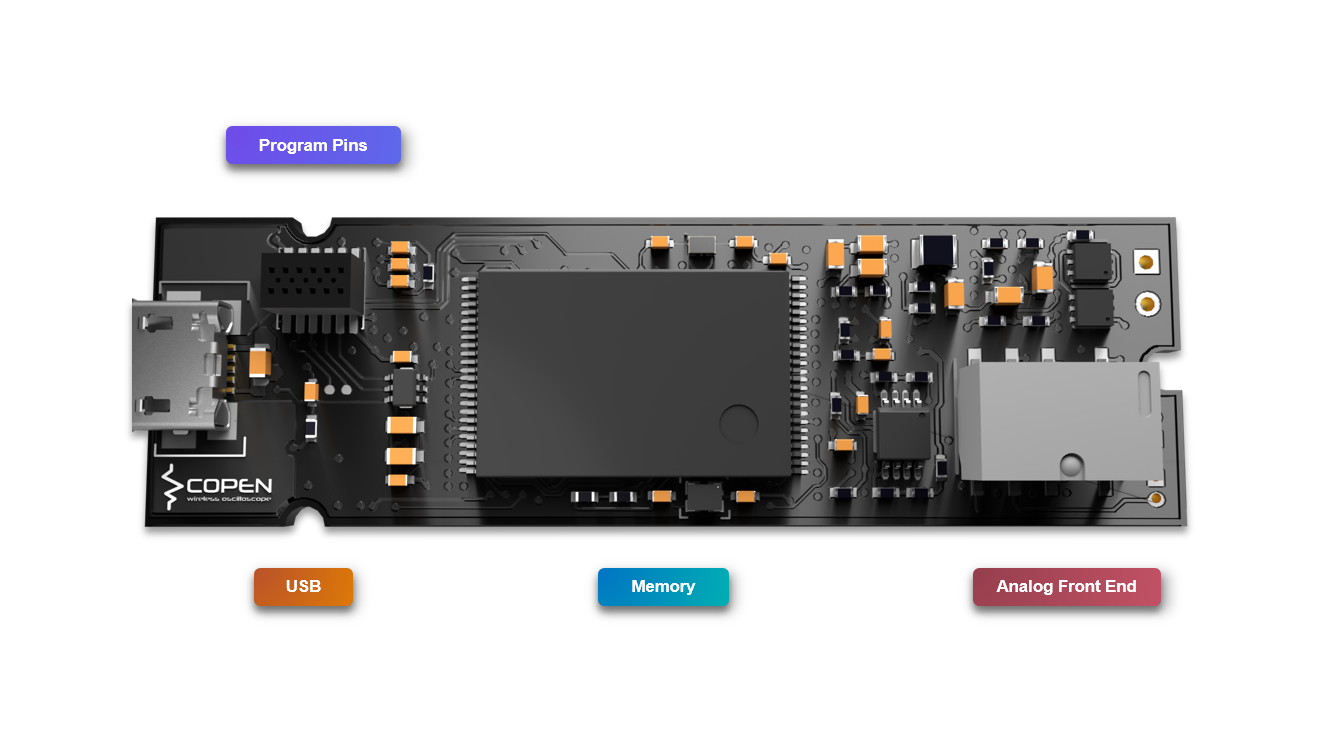

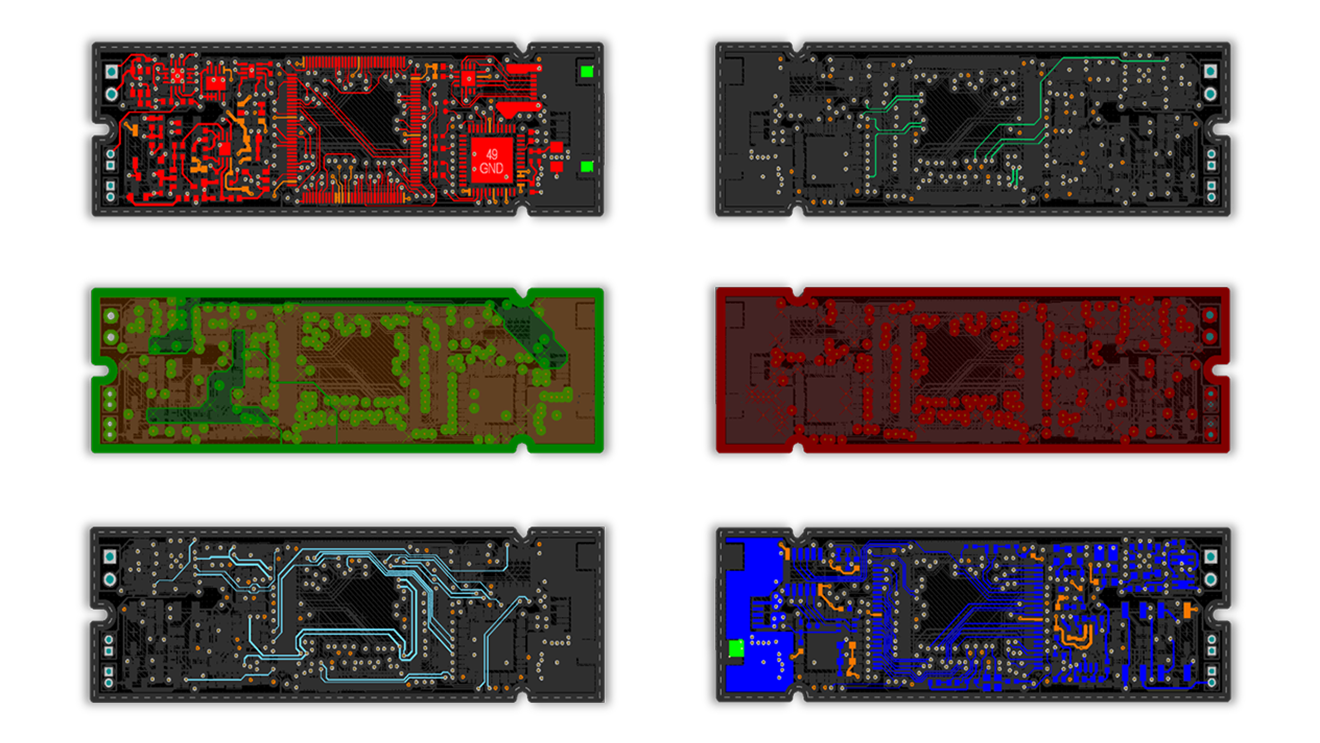

One of the challenges we faced was fitting all of the components onto a compact board while still being able to assemble the boards ourselves (we had to solder them ourselves). To minimize the size of the board, we decided to place components on both sides of the PCB. Eventually, we built the whole system on a 2.45” by 0.73”, 6-layer printed circuit board (PCB), which is even smaller than a regular stick of gum.

Here are the diagrams of all the layers.

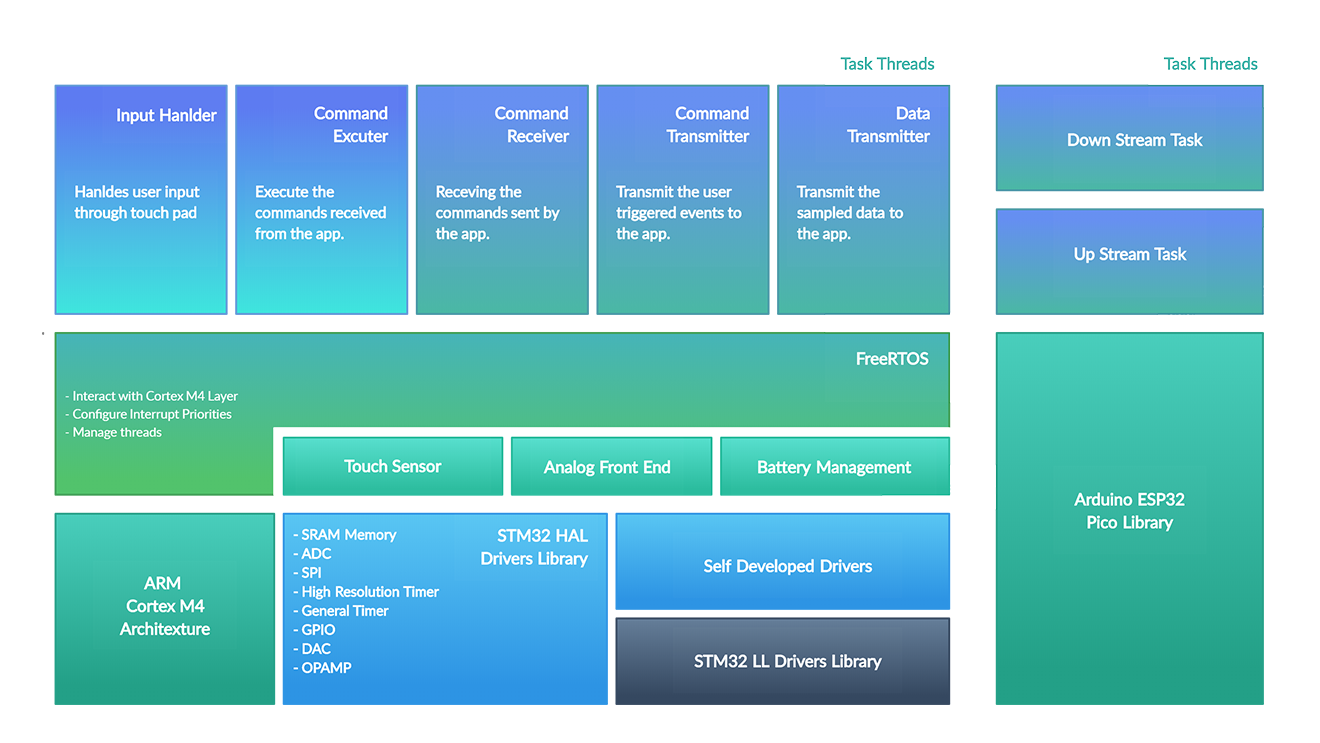

Firmware

The overall firmware architecture is shown in the following diagram.

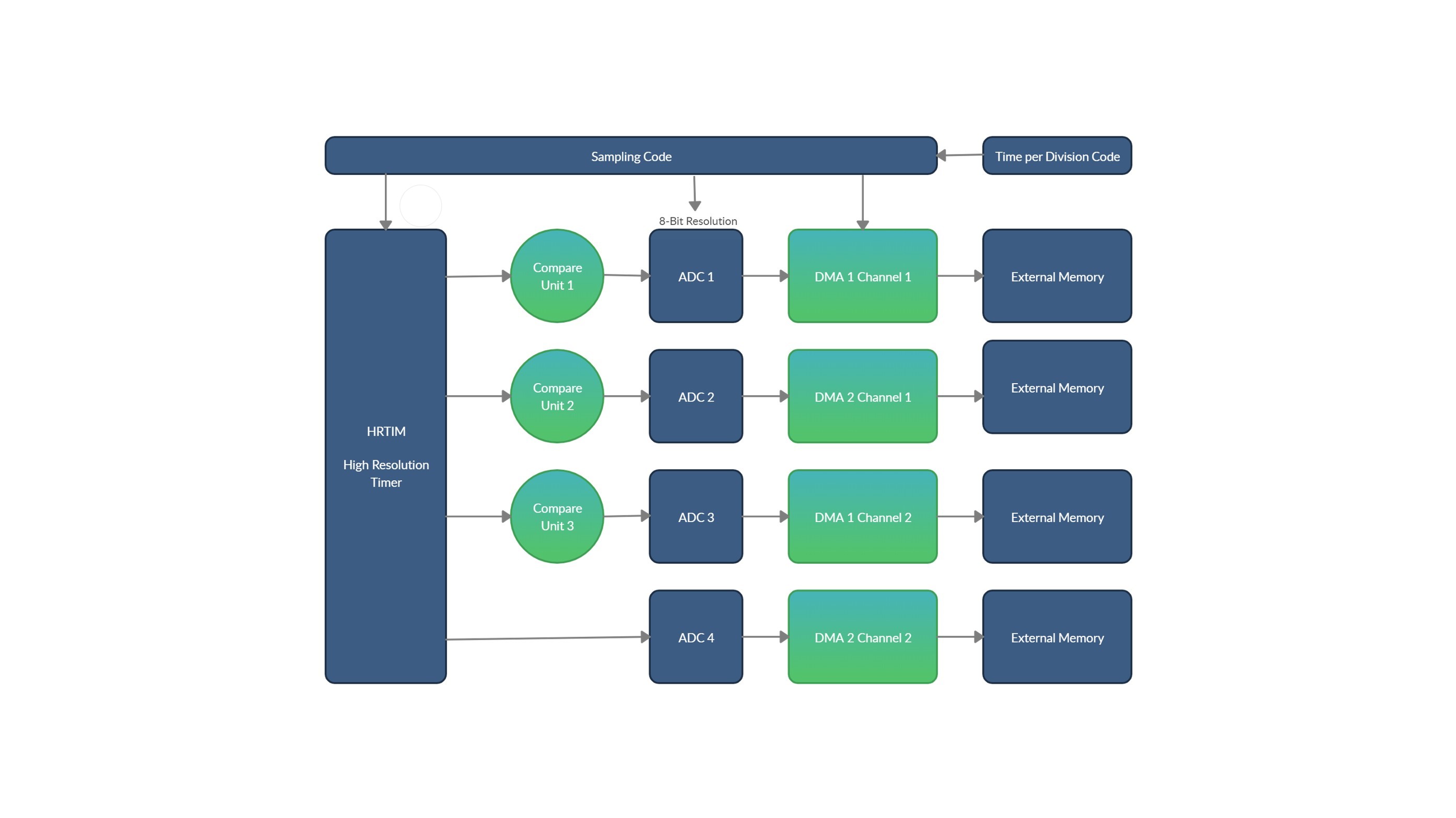

The STM32 firmware stack is on the left, and the ESP32 stack is on the right.

For the STM32 stack, we built the project mainly on the STM32 HAL Library, which provides low-level drivers for most of the internal peripherals. For peripherals where we needed special features, we wrote our own low-level drivers on top of the STM32 LL Library, which acts as a wrapper layer around the registers. For example, we needed the I2C restart (repeated start) feature to communicate with the touch sensor efficiently, so we wrote our I2C library on top of the STM32 LL I2C Library. In the middle layer, the external sensors are driven through the low-level drivers mentioned above. We also used FreeRTOS in this project because the controller needs to run different tasks “at the same time.” On the top layer, there are five threads in total in our final implementation: three handle communication, and two handle the general logic.

The ESP32 stack is relatively simple because we wrote its firmware with the Arduino library. It has two threads: one manages the downstream communication and the other manages the upstream communication.

We faced several challenges while developing the firmware. The first was how to trigger ADC sampling at a fixed time interval. We could not use interrupts if we wanted the sampling trigger time to be accurate enough, because context switching takes time and the exact amount of time is unpredictable. Our solution was to use the HRTIM (High-Resolution Timer) as the trigger source that controls when the ADCs start sampling. After each conversion finishes, the DMA controllers move the result from the ADC result register to the external SRAM. The following diagram illustrates the idea.

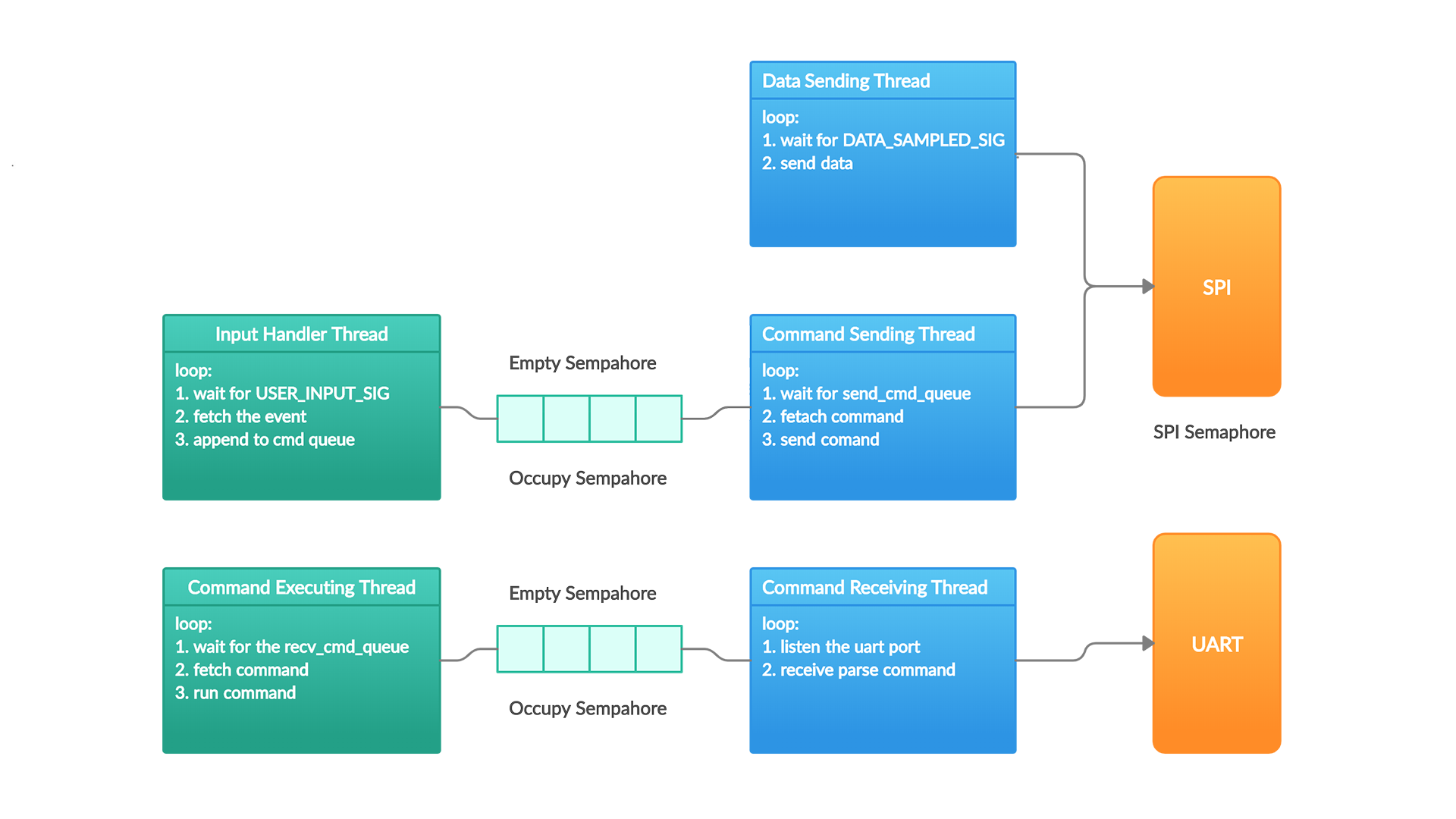
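With a hardware timer as the trigger source, the sampling interval comes down to choosing a period value in timer ticks. The following is a minimal host-side sketch of that arithmetic in Python, not the project's firmware. The function names and the 100 MHz timer clock used in the usage note are hypothetical, and a real timer also imposes minimum and maximum period limits that are not modeled here.

```python
def timer_period_ticks(timer_clock_hz: int, sample_rate_hz: int) -> int:
    """Return the number of timer ticks between two ADC triggers.

    Both arguments are assumptions supplied by the caller; the result is
    rounded to the nearest whole tick because a timer counts integers.
    """
    if timer_clock_hz <= 0 or sample_rate_hz <= 0:
        raise ValueError("clock and sample rate must be positive")
    # Integer round-to-nearest without going through floating point.
    ticks = (timer_clock_hz + sample_rate_hz // 2) // sample_rate_hz
    if ticks < 1:
        raise ValueError("sample rate is higher than the timer clock allows")
    return ticks


def actual_sample_rate_hz(timer_clock_hz: int, ticks: int) -> float:
    """Return the sample rate that an integer tick period really produces."""
    return timer_clock_hz / ticks
```

For example, with an assumed 100 MHz timer clock, `timer_period_ticks(100_000_000, 1_000_000)` gives 100 ticks. A requested rate that does not divide the clock evenly, such as 3 MS/s, rounds to 33 ticks, so the achieved rate is slightly higher than requested; `actual_sample_rate_hz` reports the value the software should use for its time axis.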

The second challenge we faced was thread management. We used the CMSIS API on top of FreeRTOS, which enables more than one thread to run on our platform. We also used several semaphores to avoid the race conditions introduced by this multithreading scheme: one semaphore controls access to the SPI interface, and two semaphores per queue indicate its empty and occupied slots.

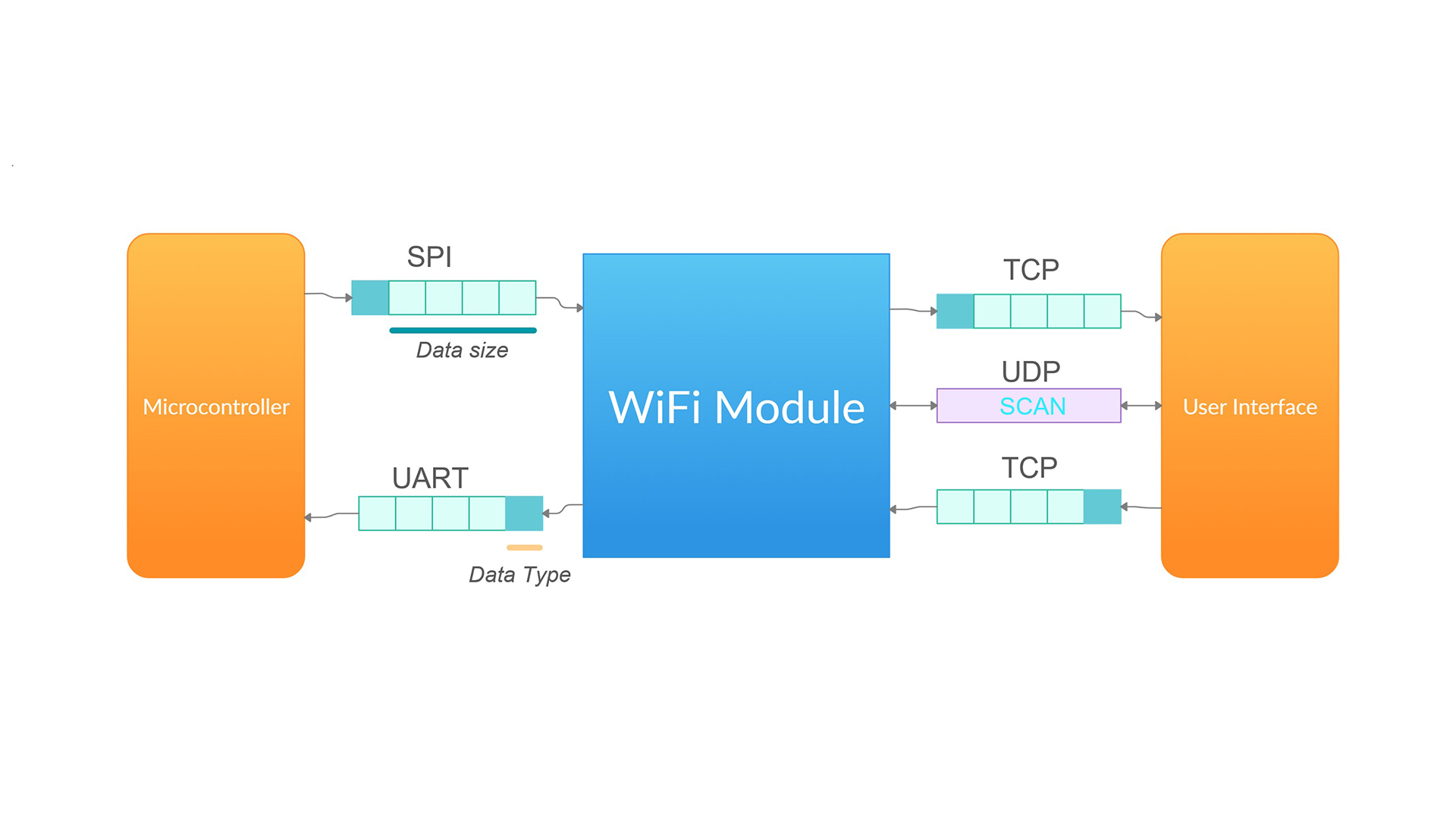
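The two-semaphore queue scheme described above is the classic bounded-buffer pattern. Below is a minimal sketch of it using Python's `threading` module rather than the CMSIS/FreeRTOS primitives the firmware uses. The class name `BoundedQueue` and the extra lock around the underlying container are assumptions made for illustration, not details taken from the project.

```python
import threading
from collections import deque


class BoundedQueue:
    """Fixed-capacity queue guarded by two counting semaphores."""

    def __init__(self, capacity: int) -> None:
        self._items = deque()
        # Counts free slots: producers block here when the queue is full.
        self._empty_slots = threading.Semaphore(capacity)
        # Counts occupied slots: consumers block here when the queue is empty.
        self._filled_slots = threading.Semaphore(0)
        # Assumption: a lock protects the container itself.
        self._lock = threading.Lock()

    def put(self, item) -> None:
        self._empty_slots.acquire()      # wait for a free slot
        with self._lock:
            self._items.append(item)
        self._filled_slots.release()     # signal one more occupied slot

    def get(self):
        self._filled_slots.acquire()     # wait for an occupied slot
        with self._lock:
            item = self._items.popleft()
        self._empty_slots.release()      # signal one more free slot
        return item
```

A producer thread that calls `put` faster than the consumer calls `get` simply blocks once `capacity` items are waiting, so neither side needs to poll, and items come out in the order they went in.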

The last challenge was enabling wireless communication between the pen and the PC/mobile software. We eventually used the UDP and TCP protocols and implemented the message forwarding on the ESP32 with its Arduino library. Overall, we have two threads that handle the upstream and downstream communication separately. The upstream channel communicates with the STM32 through the SPI interface because it carries the sampling data, and the higher speed of SPI shortens the transfer time. The downstream channel forwards user commands from the software to the pen, so a relatively slow UART interface is sufficient. Here is the block diagram.

Software

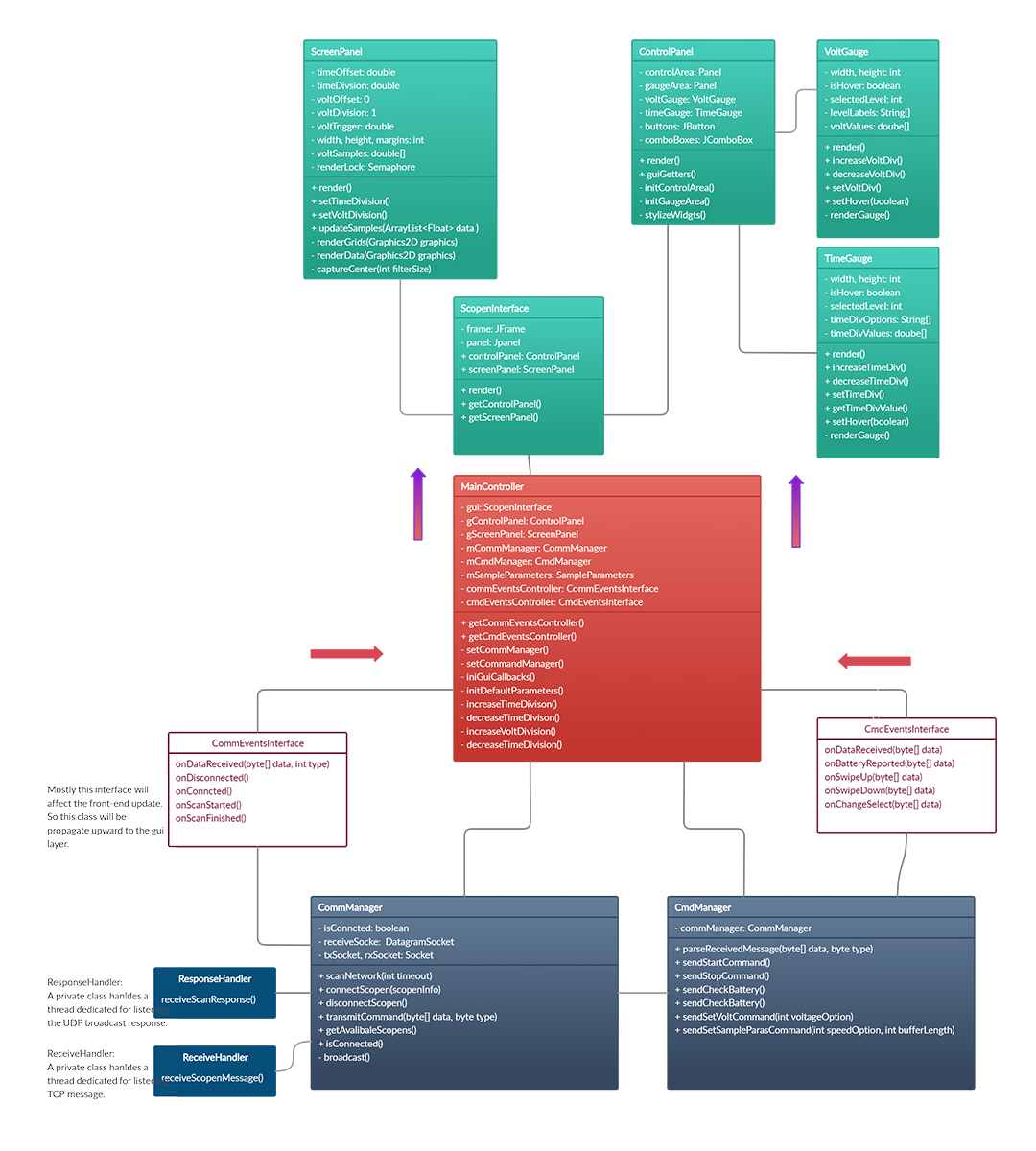

Nothing about the software development is particularly unusual. The only thing worth mentioning is that we tried to follow the Model-View-Controller design pattern. The overall architecture is shown in the following diagram.

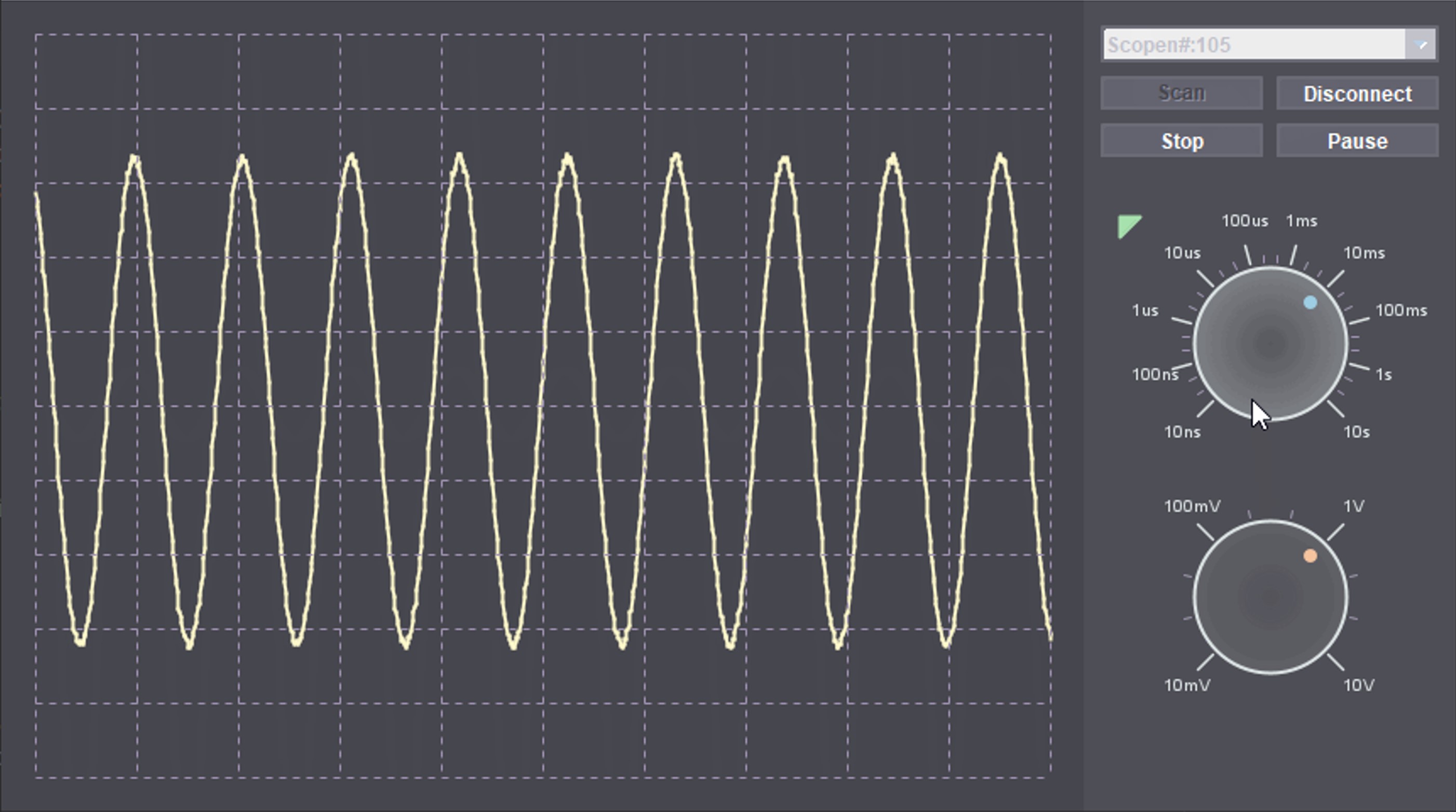

We used the Java Swing library for the front-end UI. We wrote the code that draws the knobs line by line to get the design we wanted. The current design is already intuitive and functional, with an elegant dark theme.

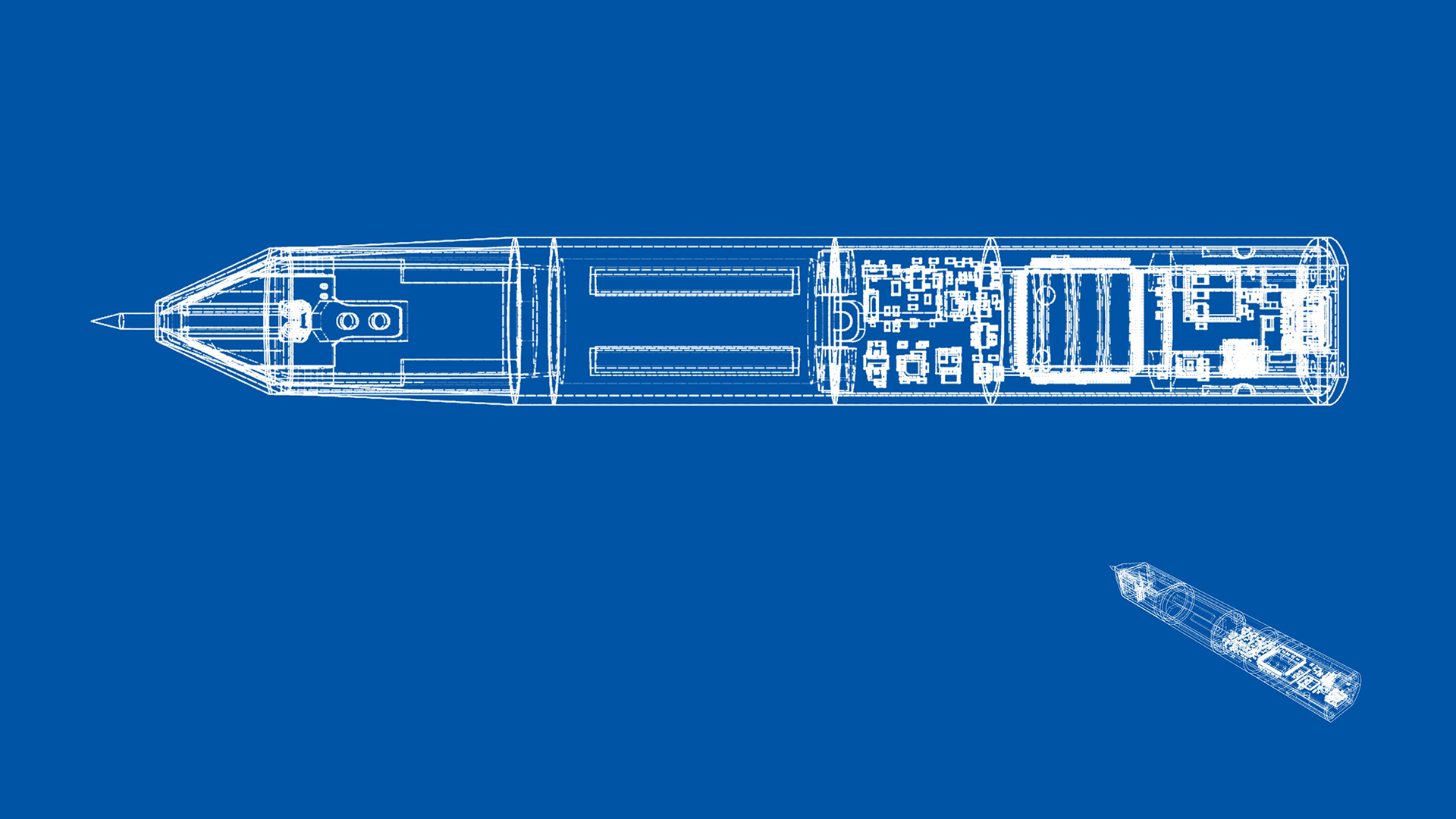

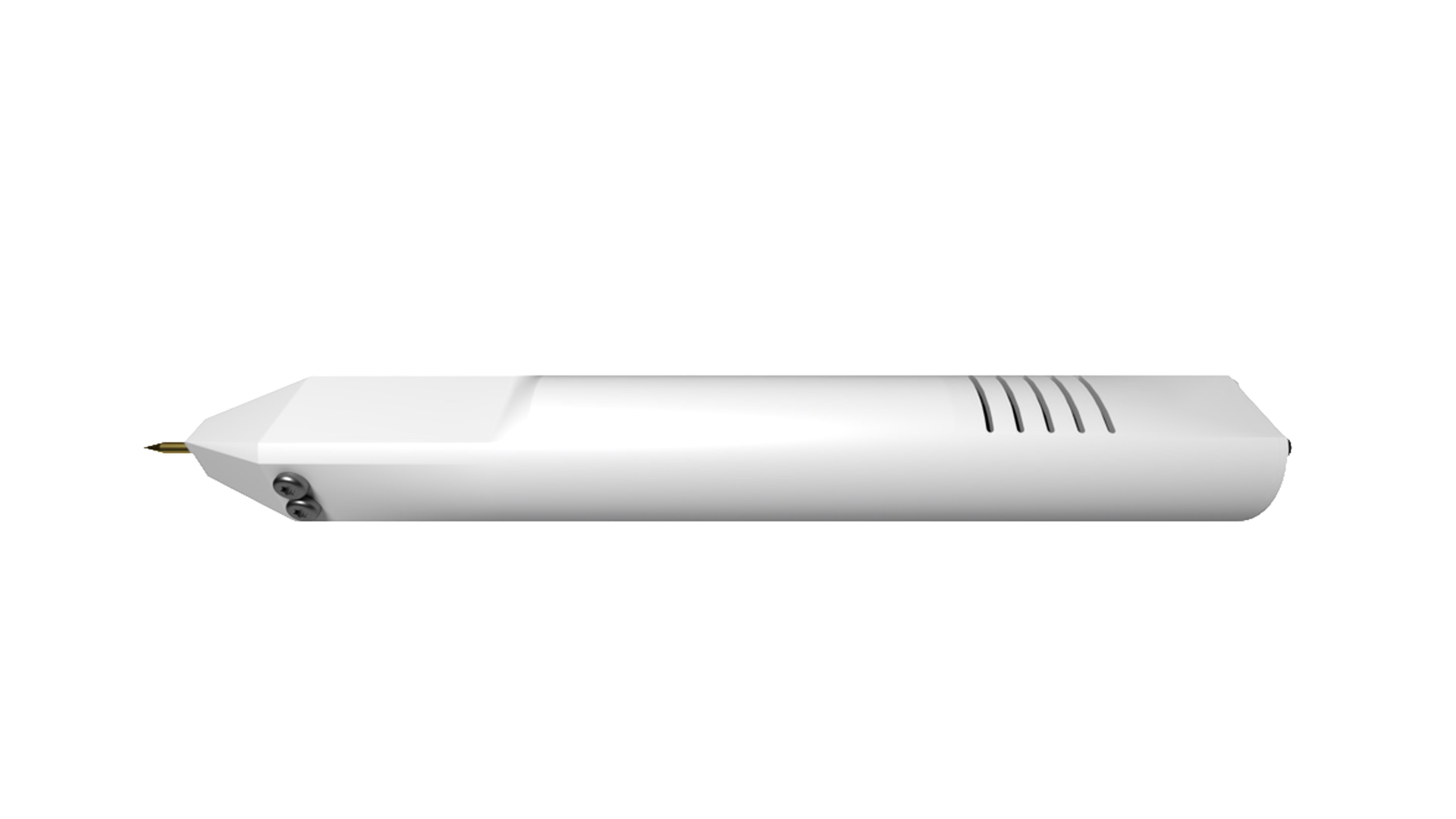

Industrial Design

We also designed a case for our product in Autodesk Fusion 360, 3D-printed it, and assembled everything to make it look like a real product. Here are some of the screenshots.

Issues & Improvements

- We can rewrite the front-end software in JavaScript using the React and Electron frameworks to improve the design and make it portable to different platforms.

- Currently, the sampling accuracy is not high, and the resolution (8 bits) is not high enough. We can probably tweak the parameters to trade some speed for accuracy, for example by enabling oversampling or simply increasing the sampling period.

- The touch sensor works as a proof of concept but is not good enough yet; it is not sensitive enough to respond reliably to user input.
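As an illustration of the oversampling idea in the second item, here is a minimal Python sketch of the standard oversample-and-decimate technique: summing 4^n raw readings and shifting right by n bits yields a result with n extra bits of resolution, at the cost of a 4^n lower output rate. This is a sketch under assumptions, not something the project implements. The function name is hypothetical, and the gain only materializes when the input carries enough noise (roughly one LSB) to dither the readings.

```python
def oversample(samples: list[int], extra_bits: int) -> int:
    """Combine 4**extra_bits raw ADC readings into one higher-resolution value.

    With 8-bit inputs and extra_bits=2, the result is on a 10-bit scale.
    """
    needed = 4 ** extra_bits
    if len(samples) != needed:
        raise ValueError(f"expected {needed} samples, got {len(samples)}")
    # The sum gains 2*extra_bits bits; shifting right keeps extra_bits of them.
    return sum(samples) >> extra_bits
```

For instance, `oversample([128, 128, 129, 129], 1)` returns 257 on a 9-bit scale, which corresponds to 128.5 on the original 8-bit scale: a value that a single 8-bit reading cannot represent.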

Acknowledgments

We want to give special thanks to Prof. Yogananda Isukapalli for managing the UCSB CE capstone program. We also want to thank our TAs, Kyle Douglas and Aditya Wadaskar, for giving us valuable advice, and Jeff Longo for helping us develop our mobile app.